Facebook has rolled out a set of tools to help users who may be having suicidal thoughts.

It is now possible to report Facebook posts that suggest someone is having suicidal thoughts. Reported posts will be reviewed by a third party, which will then decide whether that user should be offered counselling.

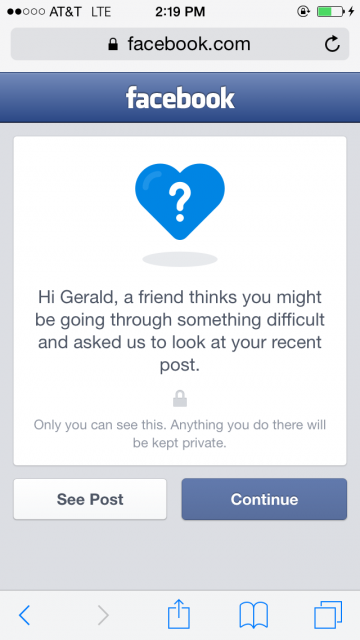

In response to the report, a message will pop up on that user's Facebook page, informing them that “a friend thinks you might be going through something difficult and asked us to look at your recent post.” Only the user who was reported will be able to see this message and whatever follows it.

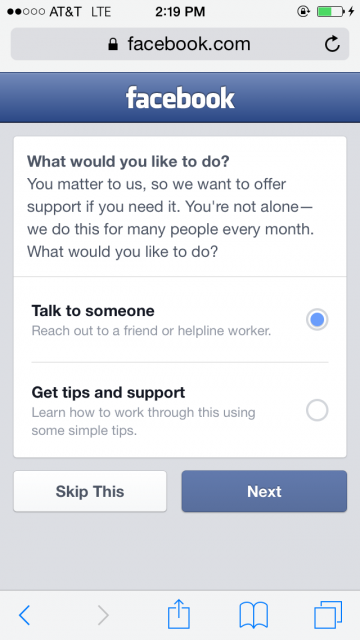

The user will be advised to talk to someone, such as a friend or a helpline worker, or to receive tips and support. The notification will state: “You’re not alone — we do this for many people every month.”

Several options follow:

Facebook has previously been heavily criticized for being insensitive. In October 2014, it had to apologize after mistakenly labelling transgender people’s names as “fake” under its “real name” policy.

Facebook collaborated with mental health organizations on its latest series of alerts to ensure that the right language is used in its messages to potentially suicidal users.

The organizations that Facebook partnered with include the National Suicide Prevention Lifeline, Forefront, Save.org, and Now Matters Now, among others.

Facebook product manager Rob Boyle said of the newly launched tool in a video announcement:

“One of the things that really improve outcomes is increased social connectedness. That’s what we do, is help people connect and help people interact.”